When I was eleven, Sir Clive Sinclair made my life a misery. I’d coped with not having the first two (pretty crappy) versions of his ZX computer, but when he brought out the ZX Spectrum in 1982, my family’s poverty cut like a knife.

It wasn’t just that we couldn’t afford Sky TV (not that it existed in the early 80s, but if it had, it would have been a pipe dream). We’d moved the year before to the council house where my dad still lives; he was looking for work, and we’d only managed to scrape through Christmas because my granddad had given us money for presents. So, things like personal computers were out of the question. I was ok(ish) with that: we’d never had money, so this was nothing new. The fact that we had a roof over our heads was a bonus, as we’d been homeless for a time the year before and had to shack up in my granddad’s one-bed retirement home.

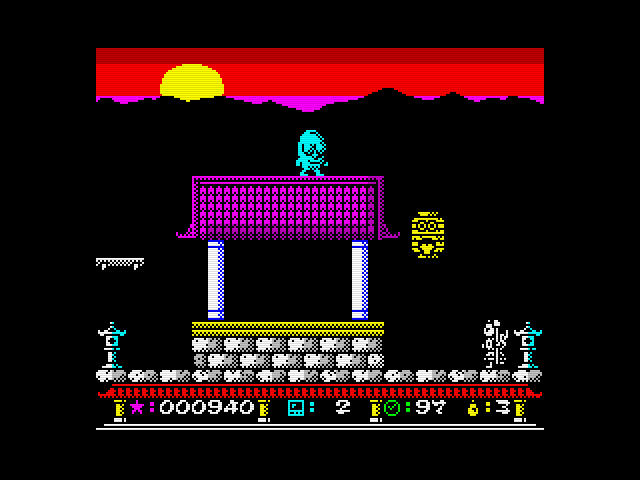

So when the ZX Spectrum was announced, with its rainbow logo and fun, spongy keys, everyone in my class wanted one. It was the first PC that I was interested in and the first that was seen by all as a status symbol. We laughed about the ZX80 and ZX81 that preceded the Spectrum. But here we had something that made sense.

When I heard that my next-door neighbour had one, I made my move. I wasn’t a confident kid, but I had to get my hands on this magical new thing. I didn’t know the boy that well as he was in the year above me, so I asked my mum to ask his mum, and soon I was in. I still remember my first ‘play’ with it (it was always ‘let’s have a play with that’ whenever we wanted to try something that didn’t belong to us). The Spectrum’s keys didn’t let you do much that was practical, but it had a cassette game loader and coloured graphics, and it gave us a hint of what was to come.

My neighbour probably got bored and annoyed with me hanging around, but I was hooked. He had a few games (Hungry Horace, Space Raiders, Buck Rogers), and I marvelled at the fact I was playing arcade-quality games in his musty-smelling bedroom. The whole thing felt illicit, dangerous, and entirely new.

I never learned to code. I never spent hours in my bedroom, hunched over an Atari, or Commodore 64, or BBC B, learning the arts that would make the likes of Mark Zuckerberg, Jeff Bezos and Elon Musk the richest men on earth. I've made my peace with that (and who needs their own island and wagyu cattle anyway?). But now it seems that my English Literature background was not in vain because, according to Jensen Huang, CEO of Nvidia, we are all coders now.

In a recent interview, Huang said this:

> I'm going to say something and it's going to sound completely opposite of what people feel. You probably recall over the course of the last 10 years, 15 years, almost everybody who sits on a stage like this would tell you it is vital that your children learn computer science. Everybody should learn how to program. And in fact, it's almost exactly the opposite. It is our job to create computing technology such that nobody has to program. And that the programming language, it's human.
>
> Everybody in the world is now a programmer. This is the miracle. This is the miracle of artificial intelligence. For the very first time, we have closed the gap. The technology divide has been completely closed. And this is the reason why so many people can engage artificial intelligence. It is the reason why every single government, every single industrial conference, every single company is talking about artificial intelligence today.


It’s pretty hard to argue against: I’ve already coded simple games with ChatGPT and can only see that getting easier as AI finds its way further inside platforms like GitHub and Replit.

But what is most telling is his point around AI ‘closing the gap’. That AI now becomes our execution layer, our technical expert, the thing that gets shit done. What does this mean for learning? What does this mean for assessment? What does this mean for the notion of the specialist? We’re only just beginning to wrap our heads around these questions, and thus far, none of us have all the answers.

His other bombshell is that programming languages are now ‘human’. Now that we have generative AI that can work out our intentions and that we can chat to about all sorts of things, we just fire away and it understands us and carries out our wishes.

It’s getting better and better. I honestly think prompt engineering is no longer a thing. It died in November 2022, although a few PhDs try desperately to cling onto it as it makes them feel relevant (move over guys, seriously). You can now have a natural conversation with AI, and even ask it how to help you. My favourite prompt hack is getting AI to write your prompts for you. Just write something like this into Claude: “Write a detailed, optimised prompt to instruct an LLM to… [add what you want the AI to do]”. Most of the prompts I’ve shared over the last year began like this. Of course, I go back and forth a lot with AI to get them spot on, and always tweak them, but they usually begin life as the output of Claude (which I generally find the best prompt writer).

It won’t be long before we have true agentic AI: AI that we can give one command to and that will trundle off, link up with its AI buddies and do all sorts of clever, useful stuff. We can already see it in miniature with a platform like Perplexity, which shows its workings as it understands your prompt, finds the right webpages, reads them and then gives you the answer. Right now I think Perplexity is the most useful of all the AIs for that reason. We tell it what we want and it works out how to get there.

The reason I refer to the period we are currently in as ‘the ZX Spectrum stage of AI’ is that, even though we thought it was amazing at the time, the Spectrum was actually a bit rubbish. There was no mouse or GUI (Apple brought those into the mainstream a year or two later), so you had to press several keys at once to enter many of the commands. It was super slow, prone to crashing, and those soft keys got pretty annoying after a while.

But now we have MacBooks with M3 and M4 chips, and it won’t be long before we have quantum computing that’s stable enough to link to AI. Then all bets are off. We have literally seen nothing yet. This is why I don’t see the point in sniffily declaring that generative AI is crap (as so many on social media do). Right now they’re right, in part: compared to where it’s heading, it’s all spongy keys and games that take an hour to load.

So, when Huang (probably the most powerful man on earth, as he’s the chief shovel-maker during this gold rush) tells us that programming is dead, we may want to listen. And think about what that means for our schools.

Here’s another one: the new Apple calculator. Take a look and tell me we’re not moving into a ‘post-answer world’ (thanks to

for this mind-melter).

Agentic AI, calculators you can write on, AI that codes for you: where will it end? (Newsflash: it won’t, as this isn’t how progress works. Progress just is.) And, by extension (or regression), where do we begin? This is what we have to get our heads around in the education system. If it’s getting easier and easier to have the answer handed to us on a plate, and these tools keep lowering the barriers to getting there, what sorts of struggle should we focus on? What do we actually need our children to do?

I believe we need to stop worrying so much about our kids having to struggle towards a predetermined answer. To move away from this obsession with doing. And to focus on creating places of learning where we enable children to be the best they can be.

It’s a cliché, I know, but we are called human beings, not human doings, for a reason. If AI can do more and more of the doing, then we can focus our education system on the being skills: being more empathic, more creative, more collaborative. More human.

I’ve been saying for a while that we need to focus all our attention and energy on making schools places where people want to be, not have to be. I think we do that by focusing on the being. Stop filling out worksheets and doing questions 1-10, and instead give students big, hairy, relevant problems to solve. This week I interviewed a group of Indian high school students who’d used AI to help them solve some genuine global problems: how to remove microplastics from the ocean, and how to get earlier warning of the sorts of stress that can lead to teenage suicide. Not a textbook in sight, and certainly no exam. Just a team of mission-driven teens who want to help make the planet better.

And wow, did they learn some stuff during these social action projects. I was blown away. One boy was confidently able to explain how his team used convolutional neural networks with their drones to identify microplastics. I asked them how on earth they had learnt such advanced ideas. Big brothers at uni, YouTube videos, working through problems with AI itself. None of them said school. Let’s get real: most useful learning now takes place outside the classroom. We have to make ourselves relevant again. Otherwise, what’s the point of us, other than to babysit children while they learn how to socialise?

So where do we go, now that we no longer need to code? When the answer is always and already there? When AI chugs away in the background 24/7, never tiring, never complaining, and always willing to help?

It’s time to reinvent where we begin. It’s time to grow the best humans possible.